Bringing agentic AI to silicon development

Hardware development teams face a unique challenge in the age of AI: while software engineers are rapidly adopting AI coding assistants, hardware designers often feel left behind. Why? Because of the semiconductor industry's unique constraints:

Proprietary code – Most production Verilog code is confidential, so LLMs see little of it during training

Commercial toolchains – EDA (Electronic Design Automation) tools are closed systems that LLMs cannot directly access

Infrastructure complexity – Knowledge is scattered across multiple disconnected systems

These constraints make it difficult for generic AI solutions to operate effectively in hardware development environments. At Annapurna Labs, part of Amazon, we believe the answer lies not in simply building better foundation models, but in developing agentic AI architectures that can bridge the gap between general-purpose LLMs and the specialized world of hardware development. We are applying the agentic paradigm to the development of the next generation of Trainium chips.

In this post, we describe what becomes possible when AI agents (such as Kiro) understand and integrate with your hardware development workflow.

Why generic LLMs fall short

Modern LLMs can generate reasonable Verilog for hardware designs, but the quality lags behind what they produce for Python or C, because most industry-grade Verilog code is confidential. Even if you manage to generate good code, how do you know it actually works? In software, you can run Python code instantly and see results. In hardware, the story is very different.

An LLM can write Verilog, but it cannot compile it with your commercial EDA tools using your team's customized settings and build flows, interpret compilation errors that require deep tool-specific knowledge, analyze waveforms or coverage reports to understand functional correctness, or iterate based on actual tool feedback rather than hallucinated assumptions. Even if we could somehow teach an LLM all these skills, there's another problem: commercial EDA tools have significant cold-start and run times. Launching a simulator, loading libraries, and running even basic sanity checks can take minutes to hours. This creates an unacceptably slow feedback loop—the AI generates code, waits 10 minutes for compilation results, then attempts another iteration. What should be an interactive conversation becomes a slow, frustrating back-and-forth.

But verification requires more than compiling code or checking properties, and bridging that gap is difficult because the hardware team's knowledge is scattered across disconnected systems. When a verification engineer asks "Why is this testbench (link) failing?", finding the answer requires navigating:

Design and architecture specifications spread across multiple documents

Source code distributed across numerous Git repositories

Regression logs buried on centralized servers, often too large to fit even in a 1M-token context window

Design reviews and decisions hidden in ticket threads

A generic AI assistant can't solve this. It can only answer questions based on what you explicitly paste into the conversation. What hardware teams actually need is an AI agent that knows where to look: one that can intelligently search your spec docs, traverse your Git history, parse massive regression logs, and synthesize information across your entire infrastructure. Today’s state-of-the-art LLMs are smart enough, but they're disconnected from the tools and context that hardware engineers rely on every day.

The agentic AI solution

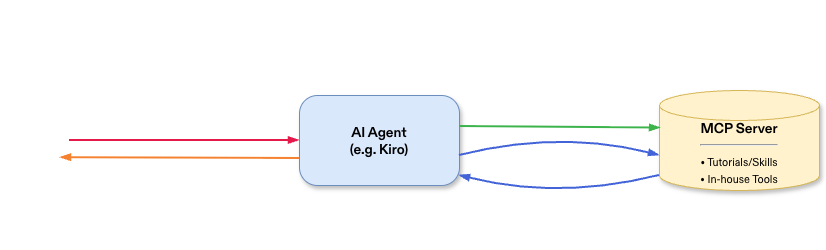

Agentic architectures solve this by making LLMs capable, not just smart. Instead of teaching AI more about hardware, we provide agents like Kiro with the tools to interact with your actual infrastructure. In this approach, the Model Context Protocol (MCP) acts as a bridge between the AI and our hardware development systems. The AI agent discovers these tools and chains them together to accomplish complex tasks. This orchestration has transformed our development process.

The diagram below shows how an AI agent interacts with hardware-domain MCP servers to complete a task.

Hardware development has two major phases: frontend (specification, RTL design, and verification) and backend (physical design, fabrication, packaging, and post-silicon validation). This post focuses on frontend workflows, similar to SDLC (Software Development Life Cycle). Let's see how this works in practice with three concrete examples:

Fast-feedback loops with open-source tools. When an engineer asks Kiro to generate a new module implementation, the agent doesn't just generate code and stop. Instead, it can leverage open-source tools like Slang (a fast SystemVerilog compiler) and EBMC (a bounded model checker) to provide near-instant feedback. Rather than waiting minutes for a commercial simulator to cold-start, the agent gets compilation results in seconds, catches basic errors immediately, and iterates rapidly. Only when the code passes these quick sanity checks does it move to full commercial tool verification. This multi-tier validation dramatically improves both speed and code quality.
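The multi-tier idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function and class names (`validate_tiered`, `TierResult`) are hypothetical, and the two checks are stand-ins. In a real flow the fast checks would wrap open-source tools and the slow check would wrap the commercial simulator; their command lines are deliberately not shown here.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Tuple

# A check takes RTL source text and returns (passed, message).
Check = Callable[[str], Tuple[bool, str]]


@dataclass
class TierResult:
    tier: str
    passed: bool
    message: str


def validate_tiered(source: str,
                    fast_checks: List[Tuple[str, Check]],
                    slow_check: Tuple[str, Check]) -> List[TierResult]:
    """Run cheap checks first; run the expensive check only if all of them pass."""
    results: List[TierResult] = []
    for name, check in fast_checks:
        passed, message = check(source)
        results.append(TierResult(name, passed, message))
        if not passed:
            return results  # fail fast so the agent can iterate immediately
    name, check = slow_check
    passed, message = check(source)
    results.append(TierResult(name, passed, message))
    return results


# Stand-in fast check (hypothetical): count module/endmodule keywords.
def balanced_module_check(source: str) -> Tuple[bool, str]:
    opens = len(re.findall(r"\bmodule\b", source))
    closes = len(re.findall(r"\bendmodule\b", source))
    if opens > 0 and opens == closes:
        return True, "module/endmodule balanced"
    return False, f"found {opens} 'module' but {closes} 'endmodule'"


# Stand-in slow check (hypothetical): always passes in this sketch.
def stub_full_simulation(source: str) -> Tuple[bool, str]:
    return True, "full simulation passed (stub)"


report = validate_tiered(
    "module foo; endmodule",
    fast_checks=[("quick-lint", balanced_module_check)],
    slow_check=("full-sim", stub_full_simulation),
)
```

The design choice worth noting is the early return: a failure in a cheap tier never pays the cold-start cost of the expensive tier.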

Intelligent compilation with in-house tools. We have specific build flows, custom scripts, and institutional knowledge about how modules should be compiled. Rather than expecting an LLM to figure this out (which it can't), we provide a detailed Markdown tutorial along with in-house tools exposed as MCP servers. When the Kiro agent needs to compile a module, it reads the tutorial and calls our actual build infrastructure, the same scripts our engineers use. Similarly, when analyzing regression logs that might contain millions of lines, the MCP server includes built-in analysis functions that extract error information, stack traces, and failure patterns. The agent receives structured insights, not raw gigabyte-sized files that would overflow any context window.
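To make "structured insights, not raw files" concrete, here is a minimal sketch of a log-analysis function of the kind such a server might contain. The log convention (lines starting with `ERROR` or `FATAL`) and the name `summarize_log` are assumptions made for illustration; the actual in-house analysis functions are not described in this post.

```python
import re
from collections import Counter
from typing import Any, Dict, Iterable

# Assumed log convention: a severity keyword at the start of the line.
ERROR_LINE = re.compile(r"^(ERROR|FATAL)\b[:\s]*(.*)$")
# Mask hex values and numbers so repeated failures share one signature.
VOLATILE = re.compile(r"0x[0-9a-fA-F]+|\d+")


def summarize_log(lines: Iterable[str], top_n: int = 3) -> Dict[str, Any]:
    """Stream a log once and return a compact, structured summary."""
    signatures: Counter = Counter()
    first_seen: Dict[str, int] = {}
    total = 0
    for lineno, line in enumerate(lines, start=1):
        total += 1
        match = ERROR_LINE.match(line.strip())
        if not match:
            continue
        signature = VOLATILE.sub("<N>", match.group(2))
        signatures[signature] += 1
        first_seen.setdefault(signature, lineno)
    return {
        "lines_scanned": total,
        "error_count": sum(signatures.values()),
        "top_failures": [
            {"signature": sig, "count": count, "first_line": first_seen[sig]}
            for sig, count in signatures.most_common(top_n)
        ],
    }


# Made-up sample lines, for illustration only.
sample = [
    "INFO: starting test",
    "ERROR: data mismatch at addr 0x1000 expected 5 got 7",
    "INFO: retry",
    "ERROR: data mismatch at addr 0x2000 expected 9 got 3",
    "FATAL: timeout after 5000 cycles",
]
summary = summarize_log(sample)
```

Because the function accepts any iterable of lines, an open file handle can be passed directly, so the log is read line by line rather than loaded into memory. The agent then sees a few hundred bytes of summary instead of the full log.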

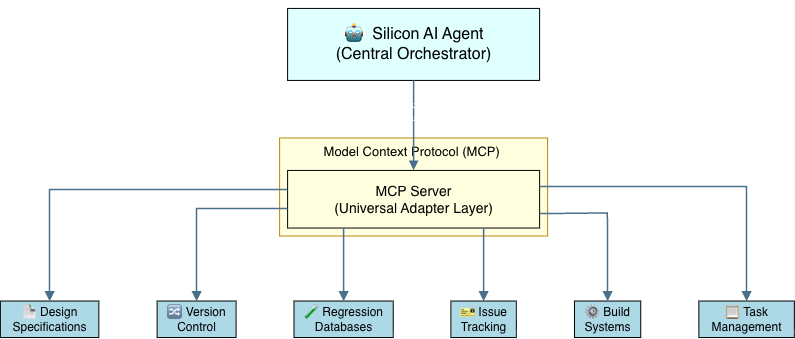

Unified access to scattered resources with an agent. We've deployed our unified Silicon AI agent atop an MCP server that provides seamless access to the team's distributed knowledge base, including spec docs, Git repositories, regression databases, and ticketing systems. When you ask Kiro with Silicon AI agent support "Why did the DMA module fail the smoke test?", the agent might do the following:

Query the regression database for the latest DMA smoke test run, extract the failure signature

Search Git for recent changes to the DMA module and related testbench code

Pull the DMA specification to understand expected behavior and identify the likely issue

File a ticket with the failure details, root cause hypothesis, and suggested fixes

The entire process takes less than 1 minute, pulling context from five different systems that would take an engineer ~20 minutes to navigate manually.
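The four steps above amount to a chain of tool calls in which each result becomes context for the next. The sketch below shows that chaining with a fixed plan. Everything in it is hypothetical: the tool names, the stub return values, and `run_plan` itself. In a real agent the LLM chooses the next tool at each step rather than following a hard-coded list, and the tools query live systems.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]


# Hypothetical tool stubs returning placeholder data.
def query_regression(ctx: Context) -> Dict[str, Any]:
    return {"test": ctx["test"], "signature": "stub failure signature"}


def search_git(ctx: Context) -> Dict[str, Any]:
    return {"recent_commits": ["stub-commit-1"]}


def fetch_spec(ctx: Context) -> Dict[str, Any]:
    return {"spec_excerpt": "stub expected-behavior text"}


def file_ticket(ctx: Context) -> Dict[str, Any]:
    # Combines the results of the earlier steps into one report.
    signature = ctx["query_regression"]["signature"]
    return {
        "ticket_summary": f"{ctx['test']} failed: {signature}",
        "suspect_commits": ctx["search_git"]["recent_commits"],
        "spec_reference": ctx["fetch_spec"]["spec_excerpt"],
    }


TOOLS: Dict[str, Callable[[Context], Dict[str, Any]]] = {
    "query_regression": query_regression,
    "search_git": search_git,
    "fetch_spec": fetch_spec,
    "file_ticket": file_ticket,
}


def run_plan(plan: List[str], ctx: Context) -> Context:
    """Call each tool in order, storing its result under the tool's name."""
    for name in plan:
        ctx[name] = TOOLS[name](ctx)
    return ctx


result = run_plan(
    ["query_regression", "search_git", "fetch_spec", "file_ticket"],
    {"test": "dma_smoke"},
)
```

Storing each result under the tool's name keeps the accumulated context inspectable, which helps when a later step (here, `file_ticket`) needs to cite where each piece of evidence came from.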

The magic isn't in the LLM, but in the orchestration. The AI agent intelligently chooses which tools to use, in what sequence, based on the task at hand. It knows when to use fast open-source tools for quick iteration, when to invoke our in-house build infrastructure for accurate compilation, and when to query distributed resources for debugging context.

Real-world impact

When these agentic capabilities are integrated into a product like Kiro, hardware teams see transformative results.

6x faster testbench development

Before Kiro's tool integration, generating a local testbench for a mid-size, syntactically correct module (~10 different blocks) would take a generic LLM agent 30 minutes on average. The process involved repeated back-and-forth: the engineer describes requirements, the AI generates code, compilation fails due to missing files or incorrect paths, the AI tries again, and the cycle repeats.

After integrating in-house filelist generation tools, the same task takes 5 minutes on average, a 6x speedup validated across multiple blocks and repeated trials. The time savings are even greater for more complex modules, and less capable LLMs benefit even more dramatically. The difference? The AI agent now intelligently invokes the correct filelist generation tools, leveraging your existing build infrastructure instead of guessing at file dependencies.

Beyond the speed-up, we’re also getting fewer, higher-quality iterations. The AI agent has access to powerful, battle-tested tools that generate correct code on the first try, rather than iterating endlessly on fundamentally flawed approaches. One engineer on our team captured it perfectly: "Before, I'd generate a testbench with an AI assistant and spend over 20 minutes providing feedback to fix basic errors—missing includes, wrong module paths, incorrect parameters. Now, with Kiro's in-house tool integration, I can get something that compiles and runs immediately. I can focus my time on the actual verification challenge, not fighting the build system."

Strong adoption validates real impact

Over the past 30 days, over 80% of engineers across the hardware team have used the team-specific AI assistant, with over 30% of engineers using it daily. That is impressive for a hardware team, where engineers are notoriously skeptical of new tools.

The breadth of use cases speaks volumes.

"Debug this regression failure at link xxx" – The agent analyzes logs, cross-references recent code changes in Git, and identifies that a specific change in a previous commit caused the regression failure. It then checks the team's ticketing system for existing reports of the same issue and, if none exist, automatically files a new ticket with the root cause analysis.

"How do I set up the formal verification flow for this new module?" – Instead of asking senior engineers, the agent searches our document sharing system for the exact setup guide and generates the initial FV script based on the instructions.

"Are there enough simulation licenses for my regression run?" – The agent queries the license server in real time, shows per-server availability and current utilization, identifies which users are holding the most licenses, and flags any servers at capacity—giving engineers instant visibility that previously required checking multiple dashboards.

"What's blocking the tape-out milestone?" – The agent performs analysis across multiple dashboards, correlates open tickets, checks regression pass rates, and synthesizes a project status summary that would take a human hours to compile.

These are the questions engineers ask dozens of times per day, which previously required context-switching, manual searching, and institutional knowledge. Now they get answered in seconds, with citations and actionable next steps.

Conclusion

The hardware industry does not just need LLMs that generate Verilog from scratch. What is far more important is supporting the entire hardware development lifecycle. Rather than focusing solely on code generation, the real opportunity is to use AI to assist across the full process—from specifications and design exploration to verification, validation, and developer productivity. This shift toward AI-assisted hardware development workflows is both more practical today and ultimately more impactful for the industry.

Generic AI coding assistants have been improving, but they will continue to be limited by their disconnection from hardware development workflows. The future belongs to domain-specific agentic systems that understand not just the syntax of Verilog, but the entire hardware development ecosystem: your build flows, verification methodologies, debugging practices, and your team's accumulated knowledge. Agents like Kiro—augmented with capabilities such as Steering, Powers, and custom MCP servers tailored for hardware workflows—demonstrate that this future is already taking shape today.

Key takeaways for hardware teams

Generic AI assistants currently fall short for hardware workflows due to the knowledge gap, tool opacity, and infrastructure fragmentation

Agentic AI with MCP changes the equation by giving LLMs structured access to your team's actual resources and tools

Real impact comes from integration, not isolation, where AI agents can compile your Verilog code, query your specifications, and analyze your logs to deliver real productivity gains

The technology is ready now: Agents like Kiro demonstrate that agentic AI for hardware isn't a future vision, but something you can deploy today

The path forward

The question facing hardware teams isn't "Will AI transform our workflows?" It's "Are we building the infrastructure that lets AI transform our workflows?" The tools you create today will determine how much value your team extracts from AI tomorrow.

We recommend you start small: pick one pain point (testbench generation, regression triage, specification search), build one tool that addresses it, and expose that tool to an AI agent. You'll quickly discover that the bottleneck wasn't AI intelligence or knowledge, but access to your world.
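As a rough picture of what "expose that tool to an AI agent" involves, the sketch below handles two JSON-RPC 2.0 requests shaped like MCP's `tools/list` and `tools/call`. It is a simplified illustration, not a conformant MCP server: it omits the initialization handshake and the transport layer, which an MCP SDK would normally provide. The tool name `generate_filelist`, the `DEPENDENCIES` map, and the file names are hypothetical.

```python
from typing import Any, Dict

# Hypothetical dependency map standing in for a real filelist generator.
DEPENDENCIES = {
    "dma_top": ["dma_top.sv", "dma_engine.sv", "fifo.sv"],
}

# Tool description the agent reads to learn what the tool does and expects.
TOOL_SPEC = {
    "name": "generate_filelist",
    "description": "Return the source files needed to compile a module.",
    "inputSchema": {
        "type": "object",
        "properties": {"module": {"type": "string"}},
        "required": ["module"],
    },
}


def text_result(text: str, is_error: bool = False) -> Dict[str, Any]:
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle_request(request: Dict[str, Any]) -> Dict[str, Any]:
    """Answer a JSON-RPC 2.0 request for the two tool-related methods."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result = {"tools": [TOOL_SPEC]}
    elif method == "tools/call":
        params = request.get("params", {})
        module = params.get("arguments", {}).get("module")
        files = DEPENDENCIES.get(module)
        if params.get("name") != TOOL_SPEC["name"] or files is None:
            result = text_result("unknown tool or module", is_error=True)
        else:
            result = text_result("\n".join(files))
    else:
        # -32601 is the JSON-RPC 2.0 code for "Method not found".
        return {"jsonrpc": "2.0", "id": request_id,
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


response = handle_request({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "generate_filelist", "arguments": {"module": "dma_top"}},
})
```

The description and input schema do most of the work here: they are what lets an agent discover the tool and decide when to call it, so they deserve the same care as the tool's implementation.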