Opus 4.7 is now available in Kiro

Nima Kaviani

Product

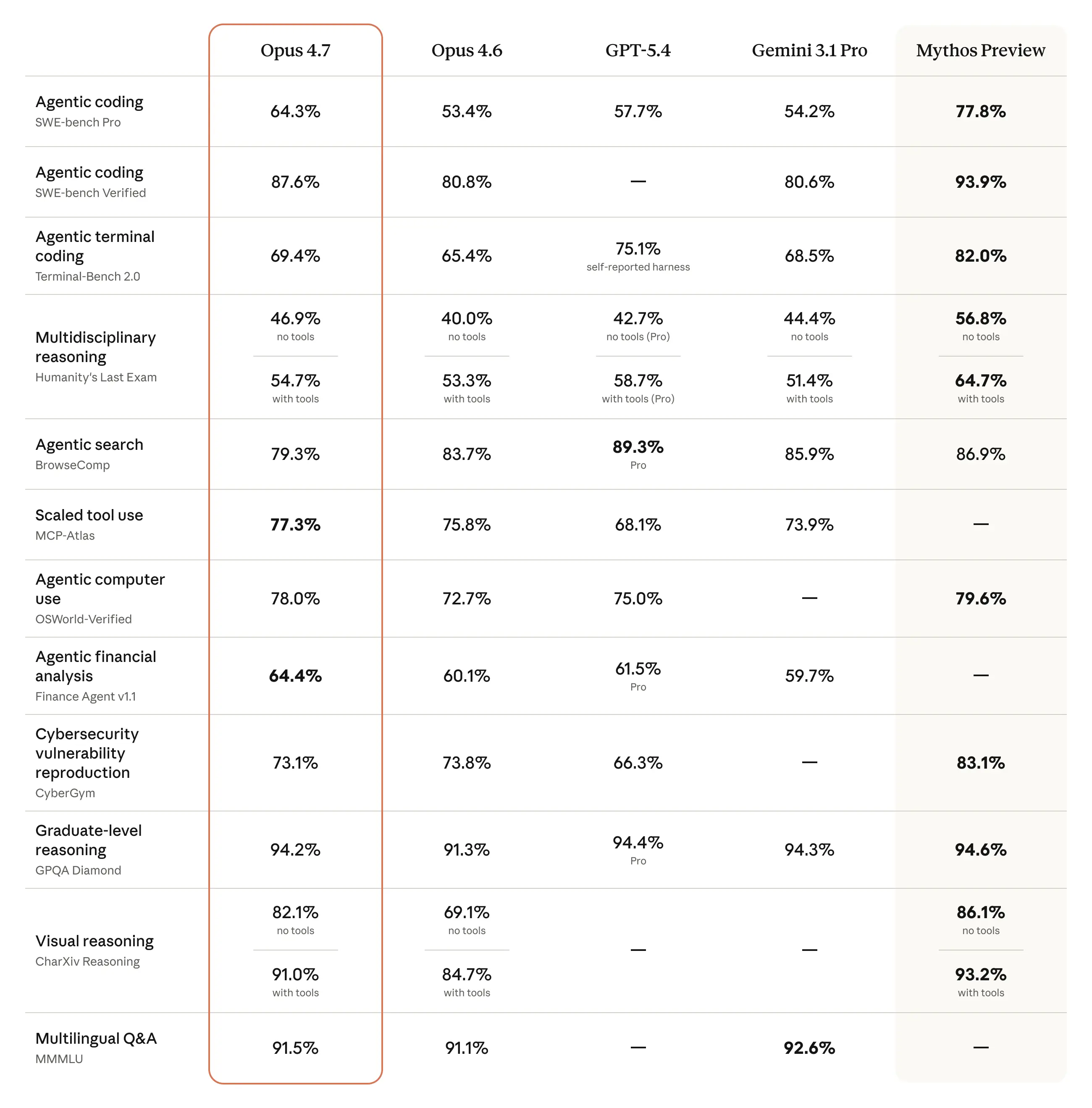

Starting today, Claude Opus 4.7 is rolling out in the Kiro IDE and CLI. Opus 4.7 is Anthropic's latest and most capable Opus model, a direct upgrade from Opus 4.6, with stronger coding performance on complex, long-running tasks.

Opus 4.7 resolves more production tasks than its predecessor and follows complex instructions more precisely across longer sessions. It handles multi-step implementations that span multiple files and tools, verifies its own outputs before returning results, and holds closer to what you asked for with stronger follow-through.

Those improvements carry into Kiro's spec-driven development. We see Opus 4.7 as the best model fit for carrying detailed specs into implementation with higher fidelity across larger codebases and broader changes. In workflows that move between planning, tool use, execution, and review, it holds its thread with less drift.

Opus 4.7 is rolling out gradually with experimental support to a subset of Kiro Pro, Pro+, and Power customers logging in with AWS IAM Identity Center in the AWS US-East-1 (Northern Virginia) and AWS Europe (Frankfurt) regions, with cross-region inference support and broader availability to follow. It ships with the full 1M context window, 2.2x credit multiplier, the same as Opus 4.6.

Download Kiro or restart the app or CLI to check for the latest available models.